OpenAI is opening a new chapter in its product strategy. As AI models grow more capable, they also tend to cost more to run in the cloud and respond more slowly. In response to industry demand for faster, cheaper, yet still intelligent solutions, OpenAI has officially announced its most capable small models to date, GPT-5.4 Mini and GPT-5.4 Nano, as of March 17, 2026.

GPT-5.4 Mini: The Perfect Balance of Speed and Power

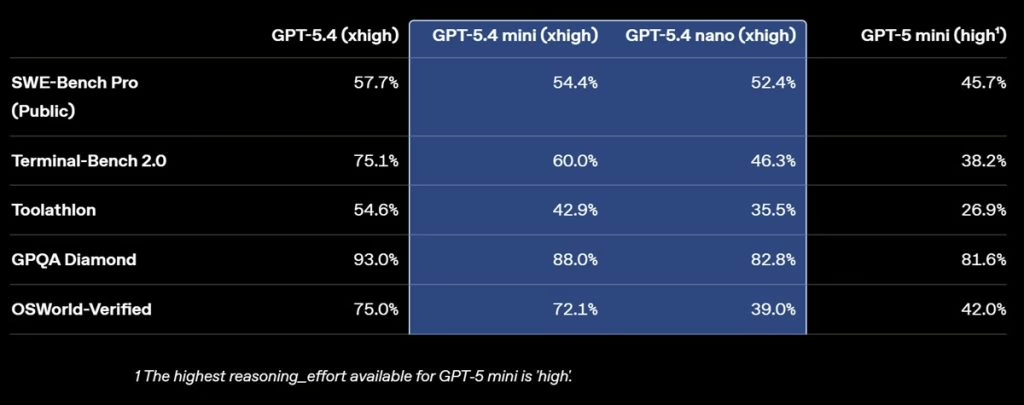

The standout of the announcement is GPT-5.4 Mini. Built on the architecture of the flagship GPT-5.4, it is optimized for high-volume, repetitive workloads. Compared with its predecessor, GPT-5 Mini, it is a substantial step up in coding, logical reasoning, multimodal understanding, and tool use.

According to the announcement, it runs twice as fast as the previous generation. On agentic benchmarks such as SWE-bench Pro and OSWorld-Verified, the Mini model approaches the performance of the full-scale GPT-5.4, and it even surpasses the flagship on specific tasks involving accuracy and citation grounding. That positions it not just as a “budget alternative” but as a primary option for enterprise software and complex agentic systems.

The Star of Efficiency: GPT-5.4 Nano

The smallest and most budget-friendly member of the family, GPT-5.4 Nano, is tailor-made for background processes where milliseconds and cost-efficiency are vital. Building on the foundation of GPT-5 Nano with a significant performance overhaul, this version is recommended for:

- Instant classification of massive datasets.

- Data extraction from unstructured text.

- Ranking algorithms.

- Managing subagents that handle simpler support tasks.

Developers and enterprises no longer need to carry the compute, or the bill, of a flagship model for straightforward data labeling or routing tasks.
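
To make the routing idea concrete, here is a minimal sketch of how an application might send each kind of task to the cheapest tier that can handle it. Everything in it is an assumption for illustration: the model identifier strings, the `Task` categories, and the `pick_model` helper are hypothetical and are not taken from OpenAI's documentation. The sketch makes no API call; it only chooses a name.

```python
from enum import Enum


class Task(Enum):
    """Hypothetical task categories, loosely following the list above."""
    CLASSIFY = "classify"
    EXTRACT = "extract"
    RANK = "rank"
    SIMPLE_SUBAGENT = "simple_subagent"
    CODING = "coding"
    DEEP_REASONING = "deep_reasoning"


# Assumed identifier strings; check the provider's model list for the real ones.
NANO = "gpt-5.4-nano"
MINI = "gpt-5.4-mini"
FLAGSHIP = "gpt-5.4"

# Cheapest tier assumed adequate for each task category.
_ROUTES = {
    Task.CLASSIFY: NANO,
    Task.EXTRACT: NANO,
    Task.RANK: NANO,
    Task.SIMPLE_SUBAGENT: NANO,
    Task.CODING: MINI,
}


def pick_model(task: Task) -> str:
    """Return the model name for a task, falling back to the flagship."""
    return _ROUTES.get(task, FLAGSHIP)


if __name__ == "__main__":
    for task in Task:
        print(f"{task.value}: {pick_model(task)}")
```

Falling back to the flagship for unlisted tasks is a deliberately conservative choice: an unknown task costs more rather than risking a weaker answer.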

What Does This Mean for the Future of Apps?

OpenAI’s move challenges the assumption that “the biggest model is always the best for every task.” As the official release emphasizes, for coding assistants that must react instantly or autonomous systems that analyze screenshots in seconds, the “best” model is the fastest and most reliable one, not necessarily the largest.

With GPT-5.4 Mini and Nano now accessible via the API, AI applications should be able to respond with far shorter wait times. Would you prefer a slightly less “intelligent” but near-instant AI assistant, or are you willing to wait for the flagship’s depth? Share your thoughts in the comments.